To print just the links, without the anchor text: `var urls = document.querySelectorAll('a'); for (var url = 0; url < urls.length; url++) { console.log(urls[url].href); }`

Extract External URLs Only

External links are the ones that point outside the current domain. If you want to extract the external URLs only, then this is the code you need to use: `var links = document.querySelectorAll('a'); for (var i = links.`…
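The external-links snippet is cut off after `for (var i = links.`, so what follows is only a sketch of one way to do the filtering, not the original code. It assumes "external" means "the hostname differs from the current page's hostname"; the helper name `filterExternalLinks` and its parameters are hypothetical.

```javascript
// Sketch: keep only links whose hostname differs from the current page's hostname.
// `filterExternalLinks`, `hrefs` and `currentHost` are hypothetical names.
function filterExternalLinks(hrefs, currentHost) {
  var external = [];
  for (var i = 0; i < hrefs.length; i++) {
    var parsed;
    try {
      parsed = new URL(hrefs[i]); // throws on empty or malformed URLs
    } catch (e) {
      continue;
    }
    // Ignore mailto:, javascript:, tel: and other non-web schemes.
    if (parsed.protocol !== 'http:' && parsed.protocol !== 'https:') continue;
    if (parsed.hostname !== currentHost) external.push(parsed.href);
  }
  return external;
}

// In a browser console, feed it the resolved href of every anchor on the page.
if (typeof document !== 'undefined') {
  var links = document.querySelectorAll('a');
  var hrefs = [];
  for (var i = 0; i < links.length; i++) hrefs.push(links[i].href);
  console.log(filterExternalLinks(hrefs, location.hostname).join('\n'));
}
```

With this comparison a subdomain such as `www.example.com` counts as external when the page is served from `example.com`; compare a shared suffix instead if that is not what you want.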

To open the console on Chrome, press Cmd + Shift + I on Mac and Ctrl + Shift + I on Windows. I can't stress enough how useful that is! The JavaScript snippets to extract links are given below. Copy the code, paste it into the console and hit Enter.

The following is cross-browser code for extracting URLs along with their anchor text: `var urls = document.querySelectorAll('a'); for (var url = 0; url < urls.length; url++) { console.log("#" + url + " > " + urls[url].innerHTML + " > " + urls[url].href); }`

Extract URLs + Corresponding Anchor Text – Styled Output (For Chrome & Firefox)

If you are using Chrome or Firefox, use the following code for a styled version of the same: `var urls = document.querySelectorAll('a'); for (var url = 0; url < urls.length; url++) { console.log("%c#" + url + " > %c" + urls[url].innerHTML + " > %c" + urls[url].href, "color:red;", "color:green;", "color:blue;"); }`

Demo of extracting links from a Wikipedia page using the dev console.

And if you want to extract just the links without the anchor text, log only `urls[url].href` in the same loop.
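The URL-plus-anchor-text listing can also be sketched as a small reusable function. This is an illustration rather than the article's own code: the helper name `formatLinkRows` is hypothetical, and it reads `textContent` instead of `innerHTML`, so nested markup such as `<img>` or `<span>` tags does not end up in the output.

```javascript
// Sketch: build one "#index > anchor text > URL" line per link.
// `formatLinkRows` is a hypothetical helper name.
function formatLinkRows(anchors) {
  var rows = [];
  for (var i = 0; i < anchors.length; i++) {
    var a = anchors[i];
    if (!a.href) continue; // skip anchors with no destination
    // Collapse runs of whitespace so each link stays on a single line.
    var text = (a.textContent || '').replace(/\s+/g, ' ').trim();
    rows.push('#' + i + ' > ' + text + ' > ' + a.href);
  }
  return rows;
}

// In a browser console, print the rows for every anchor on the page.
if (typeof document !== 'undefined') {
  console.log(formatLinkRows(document.querySelectorAll('a')).join('\n'));
}
```

Because the function returns an array of strings, the result can be joined with newlines and copied out of the console in one go.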

The browser console is an excellent tool to test and debug things. You can write JavaScript code and inject it into the current page to do all sorts of fancy things. If you are impressed with this, do learn some JavaScript, as it comes in very handy. Two other techniques to extract links from a page are also shared here for people who don't want to get their hands dirty with code.

Extract Websites From Google/Yahoo/Bing/Text Content/Text File

Use the free Website Extractor online tool for collecting websites from Google/Yahoo/Bing/text content/text file.

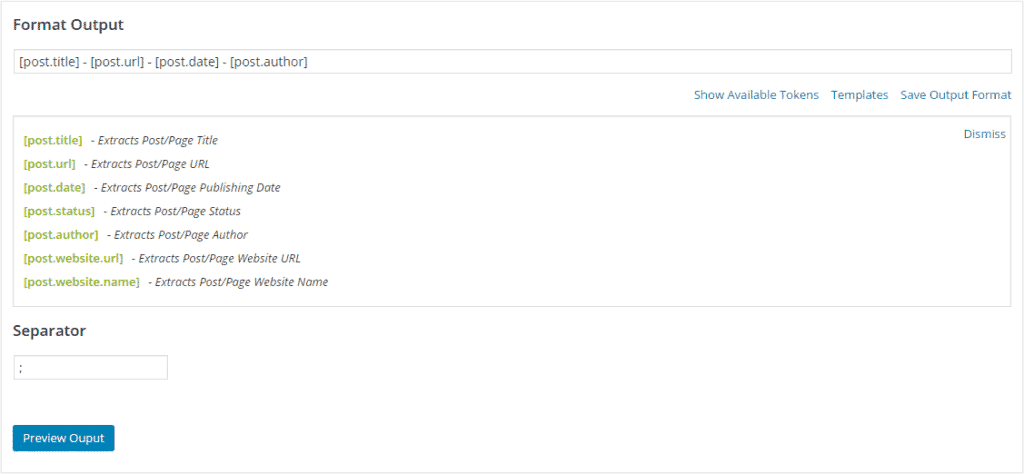

Extracting URLs using Dev Tools console

What do you do when you want to export all or specific links from a webpage? Copying them one after another is monotonous and pointless, especially when you can automate it with a line of JavaScript code. This article serves as a short demonstration of how you can use browser developer consoles to scrape data from a web page.

Search Operators: #=contains: - it will find and process all lines not ending with the searched string

The string search operation MUST be included in the first line of the textarea. It is possible to use only one string search operation at a time. There is no validation for wrong format or improper characters in the results (all input from the textarea is included). All options, except for the ones from the OTHER OPTIONS section, will be ignored.

The Website URLs Extractor API allows developers to extract links from a target URL and provides linking metadata such as the type of link and the anchor text.

How does this link grabber work? How can our link finder help you? Find all external links.

There may be similar domain extracting and create-a-disavow-file services available. Why should I pay extra to use your tool without limits?

It is a fact that links often contain identifiable or sensitive information. If you are a professional working on data collected by yourself, your associates, or your clients, it may be vitally important to protect this data from theft, unauthorized use, and malicious activities. The following table explains the most important reasons for choosing URLtoDOMAIN (UTD) over our competition:

- Powerful: processes thousands of URLs at a time; supports different protocols and hostnames; extracts/trims URLs; searches
- Secure: SSL data transmission and data masking; submitted data automatically and permanently removed
- No Tracking: no third-party cookies, no external JavaScript, no embedded applications
- Legally Protected: service based in the U.S.
- No Competition, No Conflict of Interests: we are not an SEO / data-mining company; we do not store, share, sell, or analyze your links

This tool works great! How may I repay the favor?

We'd like to thank all customers who support our efforts. If you use this tool and encounter an unexpected issue (for example, a submitted URL isn't parsed into a valid domain or subdomain name), you may use the Report link to let us know about the problem. Other than that, feel free to share your success story about URLtoDOMAIN with other web professionals!

TERMS OF USE AND PRIVACY POLICY

The tool is 100% anonymous and secure. To ensure privacy, data protection, legal compliance, and system stability, we don't permanently collect or store submitted URLs (except for the ones saved via the link). We may collect general data (the number of submitted URLs or IP addresses) for statistical purposes and to improve our Site. Users who abuse the tool may be temporarily or permanently denied access to the Site. If you have additional comments, questions, or suggestions (relevant advertising or sponsorship inquiries are welcome), you may contact us at info ~.
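Turning a list of URLs into domain names, as described above, can be approximated in the console with the standard `URL` constructor. This is a minimal sketch under that assumption and says nothing about how URLtoDOMAIN itself is implemented; the function name `extractDomains` is hypothetical.

```javascript
// Sketch: reduce a list of URL strings to their unique hostnames, in first-seen order.
// `extractDomains` is a hypothetical name; this is not the tool's implementation.
function extractDomains(urls) {
  var seen = new Set();
  var domains = [];
  for (var i = 0; i < urls.length; i++) {
    var line = String(urls[i]).trim();
    if (!line) continue; // skip blank lines
    var host;
    try {
      host = new URL(line).hostname; // throws if the line is not an absolute URL
    } catch (e) {
      continue;
    }
    if (host && !seen.has(host)) {
      seen.add(host);
      domains.push(host);
    }
  }
  return domains;
}

// Example: a pasted block of text, one URL per line.
var pasted = 'https://www.example.com/a?x=1\nhttp://www.example.com/b\nhttps://blog.example.org/post';
console.log(extractDomains(pasted.split('\n')).join('\n'));
```

Lines that are not absolute URLs are skipped silently here; a stricter version could collect them for reporting instead.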
